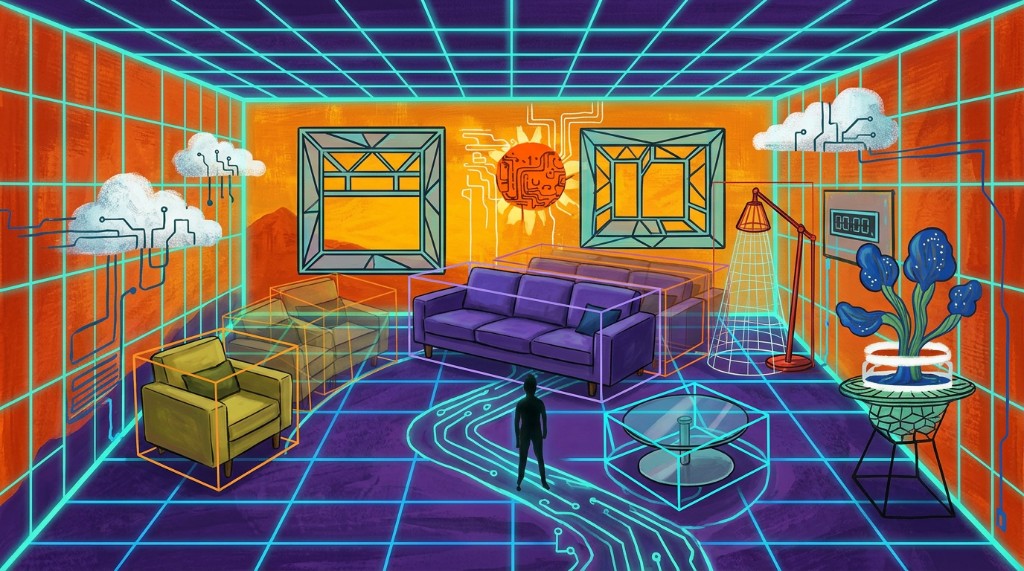

A room photo became the starting point for personalized furniture recommendations that fit the customer's actual space, style, and lighting.

We built a retail AI system that could analyze a room from a single photo, infer layout and depth, retrieve relevant furniture from live catalogs, and render replacement pieces back into the scene with believable scale, perspective, and lighting. To make that possible, we combined computer vision, monocular depth estimation, multimodal retrieval, and custom 3D generation on a very aggressive timeline. The result gave Palazzo a practical retrieval-augmented (RAG) retail system that combines AI product discovery, 3D scene understanding, and photorealistic, shoppable room transformations.

We treated this as a serious retrieval and spatial-computing problem. RAG-style retrieval, computer vision, and 3D scene understanding work together so that product discovery feels natural, visual, and commercially useful.

- Computer Vision

- Monocular Depth Estimation

- Recommender Systems

- 3D Mesh Generation

- Photorealistic Rendering

- Custom Hosting

- Retail

- Spatial Computing

- 3D Commerce

- Palazzo

Vision

The goal was to let someone snap a picture of a room and immediately receive better furniture recommendations from real catalogs, not generic ideas. Each suggestion had to be appropriately sized, styled, and placed inside the actual room so the experience felt like a believable design workflow rather than a novelty demo.

Challenge

Building that level of design intelligence meant more than object recognition. The system had to understand depth, perspective, scale, and aesthetic fit while blending 2D catalog assets into 3D room geometry with realistic placement, rotation, and lighting.

Dreamers had to make the pipeline handle the awkward middle ground between technical precision and visual plausibility: the furniture had to be correct enough for the machine and natural enough for the human eye.

- Analyze and configure room layout from a single image.

- Build a usable catalog database from real furniture sources.

- Recommend pieces that match both style and fit.

- Generate 3D meshes from 2D catalog images.

- Infer depth from monocular imagery through machine learning.

- Estimate pose and scale so replacements landed correctly in the scene.

- Blend new objects into the room with photorealistic results.
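The case study does not describe how recommendations balance style against fit. As an illustration only, the sketch below assumes that each catalog item already has a style embedding from some image encoder and known footprint dimensions. It filters out items that would not fit the target slot and then ranks the remaining items by cosine similarity to the style of the piece being replaced. `CatalogItem`, `recommend`, and the 15% size tolerance are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class CatalogItem:
    sku: str
    style_vec: Sequence[float]  # hypothetical style embedding from an image encoder
    width_m: float
    depth_m: float


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def recommend(query_vec: Sequence[float], slot_w: float, slot_d: float,
              catalog: List[CatalogItem], tol: float = 0.15, k: int = 3) -> List[str]:
    """Keep items whose footprint fits the slot (within tol), then rank by style similarity."""
    fits = [
        item for item in catalog
        if item.width_m <= slot_w * (1 + tol) and item.depth_m <= slot_d * (1 + tol)
    ]
    fits.sort(key=lambda it: cosine(query_vec, it.style_vec), reverse=True)
    return [it.sku for it in fits[:k]]
```

In this sketch, fit acts as a hard filter and style only affects ranking, so an oversized sofa never appears no matter how closely it matches stylistically. A production system might instead blend both signals into a single score.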

Obstacles

- Ridiculously tight timeline that required focused execution from day one.

- No hosting support from Palazzo, so Dreamers hosted the full project from development through production.

- Slow and expensive third-party 3D mesh tooling, which pushed the team to build an in-house alternative.

- A rapidly shifting technical landscape where state-of-the-art tools changed during the engagement.

- Missing room dimensions, which forced Dreamers to estimate geometry and scale inside the workflow.

- A key pose-and-scale model that initially took 300 seconds per run before optimization brought it down to about 10 seconds.
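Many monocular depth models output depth only up to an unknown scale. A common way to recover metric units without room dimensions is to anchor on an object whose real size is roughly known. The sketch below assumes that a standard interior door (about 2.03 m tall) has been detected and measured in the unscaled reconstruction. The reference object and the helper names are hypothetical, and the source does not say how Dreamers actually estimated scale.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def metric_scale(ref_height_relative: float, ref_height_m: float = 2.03) -> float:
    """Factor converting up-to-scale reconstruction units to meters.

    Assumes a reference object of known real height (default: a ~2.03 m door)
    was measured as ref_height_relative in the unscaled reconstruction.
    """
    if ref_height_relative <= 0:
        raise ValueError("reference height must be positive")
    return ref_height_m / ref_height_relative


def to_metric(points: List[Point], scale: float) -> List[Point]:
    """Apply a uniform scale to every reconstructed point."""
    return [(x * scale, y * scale, z * scale) for x, y, z in points]


def room_extent(points: List[Point]) -> Tuple[float, float]:
    """Rough room width (x) and depth (z) from the spread of the scaled points."""
    xs = [p[0] for p in points]
    zs = [p[2] for p in points]
    return max(xs) - min(xs), max(zs) - min(zs)
```

If the door measures 1.015 units in the reconstruction, `metric_scale(1.015)` returns about 2.0. Every point is then doubled before estimating how wide the room is.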

Under the Hood

Depth perception is especially difficult from a single image because one camera provides none of the binocular (stereo) disparity cues that humans rely on naturally. Dreamers addressed this with machine-learned monocular depth estimation, generating depth maps that encode spatial relationships across the room. Those maps made it possible to reconstruct a working 3D understanding from flat imagery.
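Turning a depth map into 3D geometry usually means back-projecting each pixel through a camera model. The sketch below assumes a pinhole camera whose intrinsics are derived from a guessed horizontal field of view, with depth given in meters along the optical axis. The source does not name the depth model or camera model Dreamers used, so `intrinsics_from_fov` and `backproject` are illustrative.

```python
import math
from typing import List, Tuple


def intrinsics_from_fov(width: int, height: int, hfov_deg: float) -> Tuple[float, float, float, float]:
    """Assumed pinhole intrinsics: square pixels, principal point at the image center."""
    fx = (width / 2) / math.tan(math.radians(hfov_deg) / 2)
    return fx, fx, width / 2, height / 2


def backproject(depth: List[List[float]], fx: float, fy: float,
                cx: float, cy: float) -> List[Tuple[float, float, float]]:
    """Convert an H x W depth map (rows of meters) into camera-frame 3D points.

    Camera frame: x right, y down (image convention), z forward.
    """
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:
                continue  # skip pixels with no valid depth
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points
```

The resulting point cloud is the raw material for later steps such as measuring objects and fitting replacement geometry.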

The system also needed to classify and mask individual items so the platform knew exactly which pixels belonged to which object. From there, Dreamers could reason about 3D bounding boxes, rotation, relative scale, and replacement geometry before blending catalog furniture back into the scene.
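Once a segmentation mask picks out one object's pixels and those pixels are back-projected, a rough 3D box with a rotation can be fitted. The sketch below is one simple approach, not necessarily Dreamers'. It assumes the camera is roughly level, so the y axis is vertical. Yaw is taken from the principal axis of the object's footprint on the x-z floor plane, and extents are measured in that rotated frame. `oriented_box` is a hypothetical helper.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float, float]


def oriented_box(points: List[Point]) -> Dict[str, object]:
    """Yaw-aligned 3D box for one object's points (x right, y down, z forward)."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    mz = sum(p[2] for p in points) / n
    # 2x2 covariance of the footprint on the x-z (floor) plane.
    sxx = sum((p[0] - mx) ** 2 for p in points) / n
    szz = sum((p[2] - mz) ** 2 for p in points) / n
    sxz = sum((p[0] - mx) * (p[2] - mz) for p in points) / n
    # Angle of the principal eigenvector, in (-pi/2, pi/2].
    yaw = 0.5 * math.atan2(2 * sxz, sxx - szz)
    c, s = math.cos(yaw), math.sin(yaw)
    # u runs along the principal axis, w is perpendicular to it.
    us = [c * (p[0] - mx) + s * (p[2] - mz) for p in points]
    ws = [-s * (p[0] - mx) + c * (p[2] - mz) for p in points]
    ys = [p[1] for p in points]
    u_mid = (max(us) + min(us)) / 2
    w_mid = (max(ws) + min(ws)) / 2
    # Rotate the box-frame center back into camera coordinates.
    center = (mx + c * u_mid - s * w_mid, (max(ys) + min(ys)) / 2, mz + s * u_mid + c * w_mid)
    size = (max(us) - min(us), max(ys) - min(ys), max(ws) - min(ws))
    return {"center": center, "yaw_rad": yaw, "size": size}
```

Using raw min/max makes the box sensitive to depth noise at mask edges, so a real pipeline would likely trim outliers first. The PCA yaw is also ambiguous for near-square footprints.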

Example prompts in the original case study show how the product responds to natural-language requests, such as modernizing a playroom sofa while keeping its approximate size, or opening up a crowded living area with a better furniture mix. This page presents that logic as a systems explanation, while the full image sequences remain in the downloadable PDF.

Why It Was Hard

A sofa in a room sounds simple until the reference image and the room image disagree on camera angle, elevation, lighting, reflections, and distance. A naive drag-and-drop swap instantly looks fake.

Dreamers had to generate a new image that respected the room's perspective, scaled the replacement item correctly for its distance from the camera, and adjusted shadows and color so the result felt seamless rather than obviously composited.
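Two of these adjustments are simple to express in code, even though the real system is not described at this level. Under a pinhole camera, an object's on-screen height is proportional to its real height divided by its depth. A crude first pass at color consistency is to shift the pasted object's per-channel mean and spread toward those of the surrounding room. The sketch below uses a simplified RGB-space mean/variance color transfer as a stand-in for proper relighting and shadowing. `projected_height_px`, `match_color`, and the focal length value are illustrative assumptions.

```python
import statistics
from typing import List, Tuple

RGB = Tuple[float, float, float]


def projected_height_px(real_height_m: float, depth_m: float, fy: float) -> float:
    """Pinhole projection: on-screen height shrinks in proportion to 1 / depth."""
    return fy * real_height_m / depth_m


def match_color(src_pixels: List[RGB], ref_pixels: List[RGB]) -> List[RGB]:
    """Shift each RGB channel of the pasted object to the room's mean and std.

    A simplified mean/variance color transfer; output values are clamped to [0, 255].
    """
    out_channels = []
    for ch in range(3):
        s = [p[ch] for p in src_pixels]
        r = [p[ch] for p in ref_pixels]
        s_mu, s_sd = statistics.fmean(s), statistics.pstdev(s)
        r_mu, r_sd = statistics.fmean(r), statistics.pstdev(r)
        gain = r_sd / s_sd if s_sd > 0 else 1.0  # avoid dividing by zero on flat patches
        out_channels.append([min(255.0, max(0.0, (v - s_mu) * gain + r_mu)) for v in s])
    return list(zip(*out_channels))
```

With an assumed focal length of 1000 px, a 0.9 m sofa back placed 3 m from the camera spans about 300 px. The same sofa at 6 m spans about 150 px, which is the kind of distance-dependent scaling a naive drag-and-drop swap ignores.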

Results

The delivered system set a stronger benchmark for interior-design automation and gave Palazzo a high-quality, fast-turnaround workflow that exceeded expectations. The platform made it easier for brands such as Decorator's Best, Ashley, and Arhaus to present furniture lines to customers in a richer and more intuitive visual environment.

Conclusion

The document closes by framing retail as part of a broader shift toward natural-language interfaces, personalized compute, and increasingly blended physical-digital experiences. Dreamers positioned the work not as a one-off retail demo, but as part of a larger evolution in how users interact with intelligent visual systems.

"I loved the founder's vision and technical understanding."